Fine-tuning vs. RAG: Which One Should You Actually Use?

You’ve got a super-smart AI model like GPT-4. It can write poems, draft emails, and even code a little.

But ask it about your company’s latest sales figures? Or your internal knowledge base? Crickets.

It knows a huge amount about the public internet (up to its training cutoff), but nothing about your private world.

So, how do you give it the specific knowledge it needs?

You'll hear two terms thrown around constantly: Fine-tuning and Retrieval-Augmented Generation (RAG). They both promise to make your AI smarter, but they work in completely different ways.

Choosing the wrong one is like trying to use a screwdriver to hammer a nail. You’ll waste time, a LOT of money, and end up with a mess.

Let's cut through the noise. We'll break down what they are, when to use each, and how to get started.

The Core Problem: Your AI Has Amnesia

A base Large Language Model (LLM) is like a brilliant new hire who just graduated from a top university. They have a massive amount of general knowledge from all the books they read (the internet data they were trained on).

But they have no idea what "Project Chimera" is, why Q3 revenue dipped, or how to access the employee benefits portal.

Your goal is to get this brilliant new hire up to speed on your company's specific information without sending them back to college for another four years.

This is where RAG and Fine-tuning come in.

What is RAG? (The Open-Book Exam)

Imagine you have to take a history test.

With the RAG approach, you’re allowed to bring the entire library with you into the exam.

You get a question: "What were the key economic factors leading to the fall of the Roman Empire?"

You don't know it off the top of your head. So you quickly run to the "Roman History" section of the library, grab the most relevant books, find the right pages, and then use that information to write a perfect answer.

That's Retrieval-Augmented Generation (RAG) in a nutshell. If you want to go deeper, read our detailed blog post on RAG here.

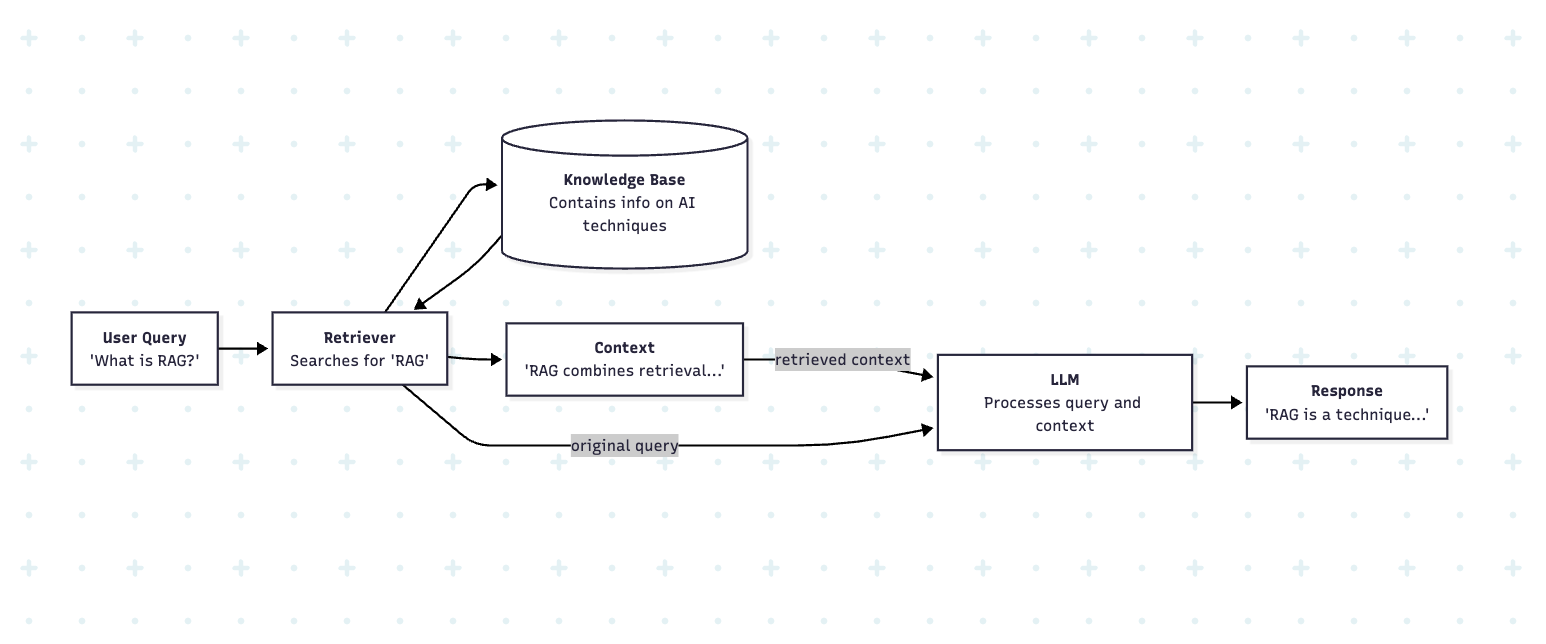

How does RAG actually work?

It’s a two-step dance:

- Retrieve (The Search): When you ask the AI a question (e.g., "What was our Q1 revenue in the APAC region?"), the system doesn't immediately ask the LLM. First, it searches your private knowledge base (your company's documents, databases, Confluence, etc.) to find the most relevant snippets of text.

- Augment & Generate (The Answer): It then takes your original question, "augments" it with the relevant information it just found, and hands it all to the LLM in one big package. The prompt looks something like this:

"Using the following information: [‘Q1 APAC revenue was $2.1M, driven by strong sales in Singapore...’], answer this question: What was our Q1 revenue in the APAC region?"

The LLM, now equipped with the right facts, can easily generate the correct answer.

The AI isn't using its own memory; it's using the information you just gave it.
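Here's the two-step dance as a minimal, dependency-free sketch. It's an illustration under loud assumptions: the three-document `KNOWLEDGE_BASE` is made up, and word-count cosine similarity stands in for the embedding model and vector database a real RAG system would use. The final LLM call is left out; the point is what the augmented prompt looks like.

```python
import math
import re
from collections import Counter

# Hypothetical knowledge base. In a real system these would be chunks of your
# company docs, embedded and stored in a vector database.
KNOWLEDGE_BASE = [
    "Q1 APAC revenue was $2.1M, driven by strong sales in Singapore.",
    "Q1 EMEA revenue was $3.4M, flat compared to Q4.",
    "Employees get 20 vacation days per year.",
]


def tokenize(text: str) -> Counter:
    """Lowercase word counts: a crude stand-in for an embedding model."""
    return Counter(re.findall(r"[a-z0-9]+", text.lower()))


def cosine(a: Counter, b: Counter) -> float:
    """Cosine similarity between two word-count vectors."""
    dot = sum(a[w] * b[w] for w in a)
    norm = math.sqrt(sum(v * v for v in a.values())) * math.sqrt(sum(v * v for v in b.values()))
    return dot / norm if norm else 0.0


def retrieve(question: str, docs: list[str], k: int = 1) -> list[str]:
    """Step 1 (Retrieve): return the k docs most similar to the question."""
    q = tokenize(question)
    return sorted(docs, key=lambda d: cosine(q, tokenize(d)), reverse=True)[:k]


def build_prompt(question: str, snippets: list[str]) -> str:
    """Step 2 (Augment): pack the retrieved snippets and the question into one prompt."""
    context = ", ".join(f"'{s}'" for s in snippets)
    return f"Using the following information: [{context}], answer this question: {question}"


question = "What was our Q1 revenue in the APAC region?"
prompt = build_prompt(question, retrieve(question, KNOWLEDGE_BASE))
print(prompt)  # this string is what would be sent to the LLM (call omitted)
```

Swapping `tokenize`/`cosine` for a real embedding model and a vector database changes the quality of the search, not the shape of the pipeline.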

Examples Where RAG is a No-Brainer

RAG shines when you need your AI to answer questions based on specific, factual, and often-changing information.

- Customer Support & Help Desks:

  - Chatbots: A customer asks, "How do I return a product I bought last week?" The RAG system fetches the latest return policy from the knowledge base and provides an accurate, up-to-date answer.

  - Support Agent Assist: A support agent gets a complex technical question. The system pulls relevant troubleshooting guides and past ticket solutions, giving the agent the exact info they need to solve the problem live.

- Internal Knowledge Management:

  - HR & Onboarding: A new hire asks, "How many vacation days do I get?" The RAG system retrieves the answer directly from the employee handbook. No more bothering HR for simple questions.

  - Sales Enablement: A salesperson is about to call a client and asks, "Give me a summary of our last 3 interactions with Client XYZ and any outstanding issues." The system pulls data from the CRM and past emails to provide a concise brief.

- Healthcare & Legal:

  - Medical Research: A doctor asks, "What are the latest treatment protocols for adult-onset asthma?" The RAG system can search a private database of the latest medical journals and clinical trial results to provide a current, evidence-based answer.

  - Legal Discovery: A paralegal needs to find all documents related to a specific case clause. The RAG system can scan thousands of legal documents in seconds to retrieve the relevant paragraphs.

What is Fine-Tuning? (The Closed-Book Exam)

Now, let's go back to that history test.

With the Fine-tuning approach, you spend weeks studying a specific textbook on Roman history. You read it over and over, memorizing the facts, the names, the dates, and even the author's style of writing.

When you get to the exam, you leave the book outside. You answer the questions based on the knowledge that is now ingrained in your brain. You've fundamentally changed your own internal memory.

That's Fine-tuning.

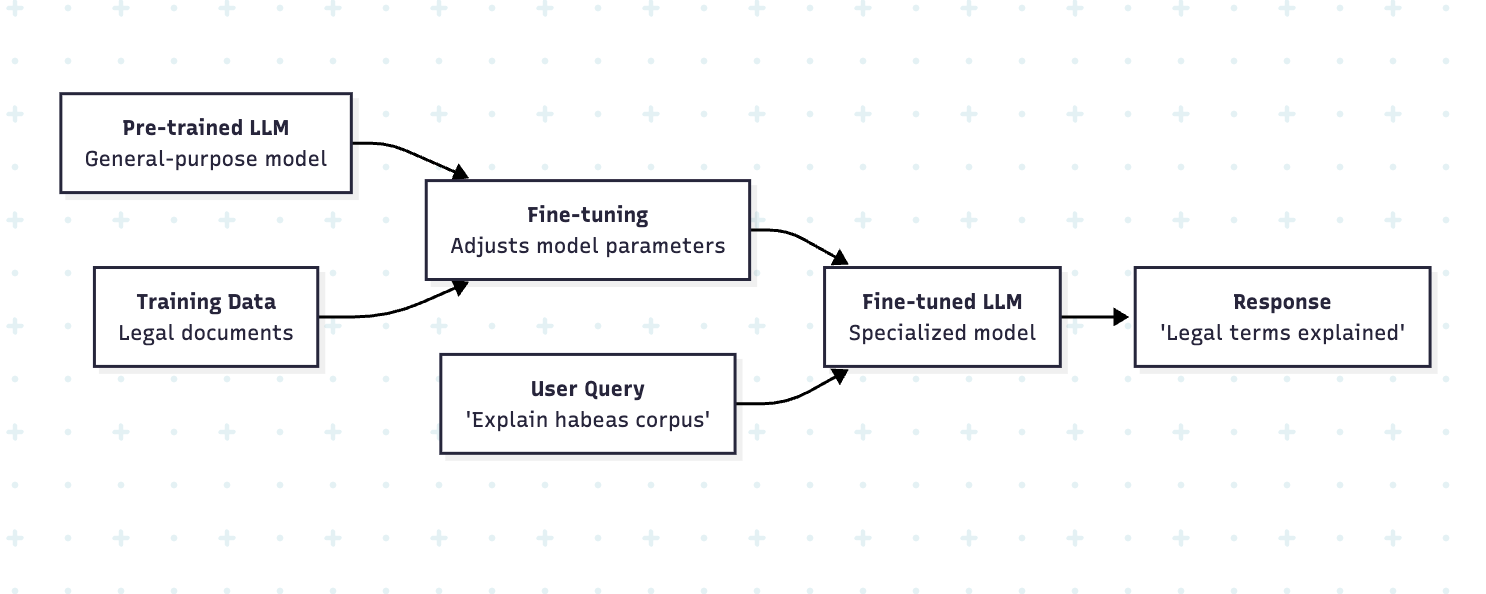

How does fine-tuning actually work?

Fine-tuning takes a pre-trained LLM and continues the training process, but on a much smaller, specific dataset. You aren't teaching it a new skill from scratch; you're adjusting its existing knowledge and behavior.

You feed it hundreds or thousands of examples, each pairing an input with the kind of output you want. For instance, if you want it to be a master at writing marketing copy in your brand's voice, you'd feed it 500 examples of your best-performing ads.
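What does that training data actually look like? Here's a minimal sketch that packs request/ad pairs into JSONL (one JSON object per line), a common format for fine-tuning data. It assumes the chat-style `messages` layout with `system`/`user`/`assistant` roles used by OpenAI's fine-tuning; other providers and libraries may expect different field names. The brand ("Acme") and the ads are hypothetical.

```python
import json

# Hypothetical brand and examples, for illustration only.
SYSTEM = "You write ads in Acme's playful brand voice."
EXAMPLES = [
    ("Write an ad for our new trail shoe.", "Mud? Bring it. The Ridgeback laughs at puddles."),
    ("Write an ad for our rain jacket.", "Forecast says rain. You say: and?"),
]


def to_chat_record(prompt: str, completion: str) -> dict:
    """One training example in the chat-style "messages" layout (system/user/assistant)."""
    return {
        "messages": [
            {"role": "system", "content": SYSTEM},
            {"role": "user", "content": prompt},
            {"role": "assistant", "content": completion},
        ]
    }


def to_jsonl(examples: list[tuple[str, str]]) -> str:
    """Serialize examples as JSONL: one JSON object per line."""
    return "\n".join(json.dumps(to_chat_record(p, c)) for p, c in examples) + "\n"


jsonl = to_jsonl(EXAMPLES)
# Save it for upload, e.g.:
# with open("brand_voice_train.jsonl", "w", encoding="utf-8") as f:
#     f.write(jsonl)
print(jsonl)
```

Two examples won't teach a model anything; the structure is the point. The real work is collecting hundreds of pairs that consistently show the behavior you want.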

The model adjusts its internal parameters (its "weights") to better mimic the style, tone, and structure of the examples you provided.

You are changing the model's core behavior. It's not looking up information; what it learned is now stored in its weights.
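To make "adjusting its weights" concrete, here's a toy with just two weights instead of billions. It's an analogy, not how LLM fine-tuning is implemented in practice, but the core mechanic is the same: start from already-trained ("pretrained") weights and keep running gradient descent on a small, specific dataset until the model matches the new examples. The numbers are made up.

```python
# "Pretrained" weights for a toy one-feature model: y = w * x + b.
w, b = 2.0, 0.0

# Small, specific fine-tuning set: the new behavior we want is y = 2x + 1.
data = [(0.0, 1.0), (1.0, 3.0), (2.0, 5.0), (3.0, 7.0)]


def mse(w: float, b: float) -> float:
    """Mean squared error of the model on the fine-tuning data."""
    return sum((w * x + b - y) ** 2 for x, y in data) / len(data)


loss_before = mse(w, b)
lr = 0.05  # small learning rate: nudge the existing weights, don't start over
for _ in range(200):
    # Gradients of the mean squared error with respect to w and b.
    grad_w = sum(2 * (w * x + b - y) * x for x, y in data) / len(data)
    grad_b = sum(2 * (w * x + b - y) for x, y in data) / len(data)
    w -= lr * grad_w
    b -= lr * grad_b

loss_after = mse(w, b)
print(f"loss {loss_before:.3f} -> {loss_after:.5f}, w={w:.3f}, b={b:.3f}")
```

Notice that nothing gets "looked up" afterwards: the new behavior lives entirely in the updated values of `w` and `b`.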

Examples Where Fine-Tuning is Essential

Fine-tuning is for when you need to change the how—the style, personality, or format of the AI's output.

- Brand Voice & Marketing:

  - Content Creation: Your brand has a very specific, quirky, and fun voice. You can fine-tune a model on all your past blog posts and social media updates. Now, when you ask it to "draft a tweet about our new product," it will do so in your exact brand voice, not a generic one.

  - Ad Copy Generation: A marketing agency wants to generate high-converting Facebook ad copy. They can fine-tune a model on their 1,000 best-performing ads from the past. The model learns the structure and persuasive language that works for their audience.

- Specialized Industries:

  - Medical Scribe: You want an AI that listens to a doctor-patient conversation and writes a medical summary in the specific format required for electronic health records (EHR). You'd fine-tune it on thousands of example conversations and their corresponding formatted summaries.

  - Code Generation: A company uses a proprietary programming language. They can fine-tune a model on their entire codebase. The model learns the syntax and best practices of that specific language, making it a useful coding assistant for their developers.

- AI Personality & Character:

  - Chatbot Persona: You want to create a chatbot that acts as a sassy, sarcastic sommelier for a wine app. You can't get that personality from a knowledge base. You need to fine-tune the model on dialogue examples that capture that specific character.

  - Summarization Style: You need summaries of legal documents that are extremely concise and only include risks and liabilities. A generic summary won't work. You fine-tune the model on thousands of legal docs and their corresponding "risk-only" summaries.

RAG is for What, Fine-Tuning is for How

This is the single most important takeaway:

Use RAG to teach your AI what to say. Use Fine-tuning to teach it how to say it.

- If your problem is that the AI doesn't know the answer (it's a knowledge gap), you need RAG. You're giving it an open book to find the facts.

- If your problem is that the AI knows the facts but can't behave the way you want (it's a style, format, or personality gap), you need Fine-tuning. You're sending it to finishing school to learn new manners.
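The rule of thumb is small enough to write down as code. This is a hypothetical helper (the names `choose_approach` and `Approach` are made up for illustration); it deliberately ignores cost, data freshness, and team expertise, which matter in a real decision.

```python
from enum import Enum


class Approach(Enum):
    NEITHER = "the base model already does the job"
    RAG = "RAG"
    FINE_TUNING = "fine-tuning"
    BOTH = "RAG + fine-tuning (e.g. RAFT)"


def choose_approach(knowledge_gap: bool, behavior_gap: bool) -> Approach:
    """knowledge_gap: the model doesn't know the facts (the *what*).
    behavior_gap: the model knows the facts but answers in the wrong style or format (the *how*)."""
    if knowledge_gap and behavior_gap:
        return Approach.BOTH
    if knowledge_gap:
        return Approach.RAG
    if behavior_gap:
        return Approach.FINE_TUNING
    return Approach.NEITHER


# "What was our Q1 APAC revenue?" is a knowledge gap, not a style problem.
print(choose_approach(knowledge_gap=True, behavior_gap=False).value)  # RAG
```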

The Nitty-Gritty: A Head-to-Head Comparison

| Feature | RAG (Retrieval-Augmented Generation) | Fine-Tuning |
|---|---|---|
| Core Purpose | Accessing external, up-to-date knowledge. | Modifying the model's core behavior, style, or format. |
| Analogy | Open-book exam | Closed-book exam (after studying) |
| Data Needs | Your knowledge base (docs, PDFs, databases). | A curated dataset of hundreds to thousands of high-quality examples. |
| Cost | Lower upfront. Mainly embedding, vector DB, and inference costs (prompts get longer because they carry the retrieved context). | Higher upfront. Requires significant compute for the training process, plus data preparation. |
| Data Freshness | Dynamic. Easy to update: just add or change a doc in the knowledge base. | Static. If your info changes, you have to fine-tune again. |
| Hallucinations | Lower risk. The model is "grounded" by the provided facts and can cite its sources. | Higher risk. The model is generating from memory and can still make things up. |
| Implementation | Easier. Many off-the-shelf tools and frameworks exist (e.g., LangChain, LlamaIndex). | Harder. Requires data prep, hyperparameter tuning, and ML expertise. |
| Interpretability | High. You can see exactly which documents were retrieved and passed to the model. | Low. It's a black box. You don't know why it answered the way it did. |

Let's Talk About the Tough Stuff

Cost

- RAG: Cheaper to get started. Your main costs are for storing your data in a special database (a "vector database") and paying for API calls each time a user asks a question.

- Fine-tuning: Can be expensive. You're paying for powerful GPUs to run the training process, which can take hours or even days. It's a significant upfront investment.
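A back-of-the-envelope calculator helps make this trade-off concrete. This is a sketch with placeholder prices: every number in `Prices` and in the usage example is made up, so substitute your provider's real rates and your own traffic. It assumes token-based pricing and models training cost as tokens processed times epochs; if you train on your own GPUs, think of `training_per_m` as GPU cost per million tokens processed. A fine-tuned model still has its own per-query inference cost, which this sketch leaves out.

```python
from dataclasses import dataclass


@dataclass
class Prices:
    """Placeholder prices in dollars per 1M tokens. Not real rates."""
    input_per_m: float
    output_per_m: float
    training_per_m: float


def rag_monthly_cost(p: Prices, queries: int, question_tokens: int, context_tokens: int,
                     answer_tokens: int, vector_db_monthly: float) -> float:
    """Recurring cost: every query carries the retrieved context as extra input tokens."""
    input_tokens = queries * (question_tokens + context_tokens)
    output_tokens = queries * answer_tokens
    api_cost = (input_tokens * p.input_per_m + output_tokens * p.output_per_m) / 1_000_000
    return api_cost + vector_db_monthly


def fine_tune_upfront_cost(p: Prices, dataset_tokens: int, epochs: int) -> float:
    """One-time cost: the training set is processed once per epoch."""
    return dataset_tokens * epochs * p.training_per_m / 1_000_000


prices = Prices(input_per_m=1.0, output_per_m=4.0, training_per_m=8.0)  # made-up numbers
rag_cost = rag_monthly_cost(prices, queries=10_000, question_tokens=50, context_tokens=1_500,
                            answer_tokens=200, vector_db_monthly=20.0)
ft_cost = fine_tune_upfront_cost(prices, dataset_tokens=2_000_000, epochs=3)
print(f"RAG: ${rag_cost:.2f}/month, fine-tuning: ${ft_cost:.2f} upfront per training run")
```

Note how `context_tokens` dominates the RAG bill in this example: retrieving fewer, tighter snippets is often the cheapest optimization.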

Is your data static or dynamic?

- If your information changes daily or even weekly (e.g., product inventory, company policies, news), RAG is your only sane option. Updating a fine-tuned model is too slow and expensive.

- If your goal is to teach a timeless skill or style (e.g., your brand voice, how to format a legal brief), Fine-tuning works well because that "knowledge" doesn't expire.

Hallucinations

Every AI can "hallucinate" or make things up.

- RAG reduces this risk because it grounds the model's answer in the documents you provide. If you also instruct the model to answer only from those documents, it is more likely to say "I don't know" when the answer isn't there.

- Fine-tuning can sometimes increase the risk of subtle hallucinations. The model might blend facts from its original training with what it learned from you, creating plausible but incorrect information.
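Much of RAG's grounding comes from how you write the prompt. Here's a minimal sketch of a "grounded" prompt builder: it numbers each retrieved snippet so the answer can cite it, and tells the model to say "I don't know" rather than guess. The instruction wording and the file names are assumptions for illustration, and no prompt eliminates hallucinations entirely; it just makes them less likely and easier to spot.

```python
def build_grounded_prompt(question: str, sources: list[tuple[str, str]]) -> str:
    """Number each (name, text) snippet for citation and ask the model to refuse when unsure."""
    numbered = "\n".join(
        f"[{i}] ({name}) {text}" for i, (name, text) in enumerate(sources, start=1)
    )
    return (
        "Answer the question using ONLY the sources below. "
        "Cite the source number(s) you used, like [1]. "
        "If the sources do not contain the answer, reply exactly: I don't know.\n\n"
        f"Sources:\n{numbered}\n\n"
        f"Question: {question}"
    )


# Hypothetical retrieved snippets (file names are illustrative).
sources = [
    ("returns_policy.md", "Items can be returned within 30 days with a receipt."),
    ("shipping_faq.md", "Standard shipping takes 3-5 business days."),
]
print(build_grounded_prompt("How do I return a product I bought last week?", sources))
```

Because every snippet carries a number and a source name, you can also check afterwards that each citation in the answer points at a document that was actually retrieved.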

Is There a Best of Both Worlds? (Introducing RAFT)

What if you need both? What if you want an AI that can access up-to-date information (RAG) and has learned a specialized skill (Fine-tuning)?

This is where the cutting edge is heading. A technique called RAFT (Retrieval-Augmented Fine-Tuning) combines both approaches to get the best of Retrieval-Augmented Generation and Fine-Tuning.

The idea is to fine-tune the model on question-answering examples where each question comes with a handful of documents: some that actually contain the answer and some irrelevant "distractors." The model learns to pick out and cite the passages that actually answer the question, and to ignore the ones that don't.

It's like teaching a student how to be an expert "open-book exam taker."
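Here's a rough sketch of how one RAFT-style training example might be assembled, loosely following the recipe described in the RAFT paper: the context mixes the relevant ("oracle") document with distractors, and for a fraction of examples the oracle is left out entirely so the model also has to absorb the domain knowledge itself. The function name, the `<doc>` tags, and the `p_oracle=0.8` default are illustrative assumptions, not the paper's exact settings. The paper also recommends answers that reason step by step and quote the oracle document, which the sample `answer` below imitates.

```python
import random
from typing import Optional


def make_raft_example(question: str, oracle_doc: str, distractor_pool: list[str],
                      answer: str, num_distractors: int = 3, p_oracle: float = 0.8,
                      rng: Optional[random.Random] = None) -> dict:
    """Build one prompt/completion pair: distractors, plus the oracle doc with probability p_oracle."""
    if rng is None:
        rng = random.Random()
    docs = rng.sample(distractor_pool, num_distractors)
    if rng.random() < p_oracle:
        docs.append(oracle_doc)
    rng.shuffle(docs)  # don't let the model learn "the answer is always the last doc"
    context = "\n".join(f"<doc>{d}</doc>" for d in docs)
    return {"prompt": f"{context}\nQuestion: {question}", "completion": answer}


# Hypothetical handbook snippets.
pool = [
    "Parking passes are issued by Facilities.",
    "The office closes at 6 pm on Fridays.",
    "Expense reports are due by the 5th of each month.",
    "The VPN must be used on public Wi-Fi.",
]
oracle = "Employees get 20 vacation days per year."
answer = "The handbook says: 'Employees get 20 vacation days per year.' So the answer is 20 days."
example = make_raft_example("How many vacation days do I get?", oracle, pool, answer,
                            rng=random.Random(0))
print(example["prompt"])
```

You'd generate thousands of these from your own documents and then fine-tune on them exactly as in the brand-voice case; only the shape of the training data changes.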

This is an advanced topic, but it shows that the line between RAG and Fine-tuning is starting to blur.

I'm a Beginner. Where Should I Start?

Start with RAG. Period.

Here’s why:

- It’s Cheaper & Faster: You can build a proof-of-concept RAG system in an afternoon.

- It Solves More Problems: Most business problems are knowledge gap problems, not style problems.

- It’s More Controllable: You have direct control over the information your AI uses.

Build a RAG system first. See how far it gets you. Only when you hit a wall that RAG can't solve—like needing a very specific personality or output format—should you even begin to consider the cost and complexity of fine-tuning.

Ready to build your first RAG application? We have a step-by-step tutorial on n8n that will help you get the hang of it. Link to the Workflow

Conclusion: The Right Tool for the Job

Choosing between RAG and Fine-tuning isn't about which one is "better." It's about understanding your specific problem.

- Need to answer questions from a specific knowledge base? Use RAG.

- Need to change the AI's fundamental behavior, style, or voice? Use Fine-tuning.

By picking the right tool, you'll save yourself a world of pain and build something that actually works. Now go give your AI the brain it needs.