You see the names everywhere. A new AI model drops and the internet goes wild. But they're not all the same.

Think of it like cars. You have sedans, SUVs, and sports cars. They all drive, but you use them for different things. LLMs are similar.

Let's break down the 7 key ways they're classified. No jargon, just the stuff that matters.

If you’re new to how LLMs process and count text internally, our “LLM Tokens Explained: Beginner’s Guide” is a perfect starting point. It explains how tokens work: they are the basic units every language model uses to understand and generate text.

Types of LLMs

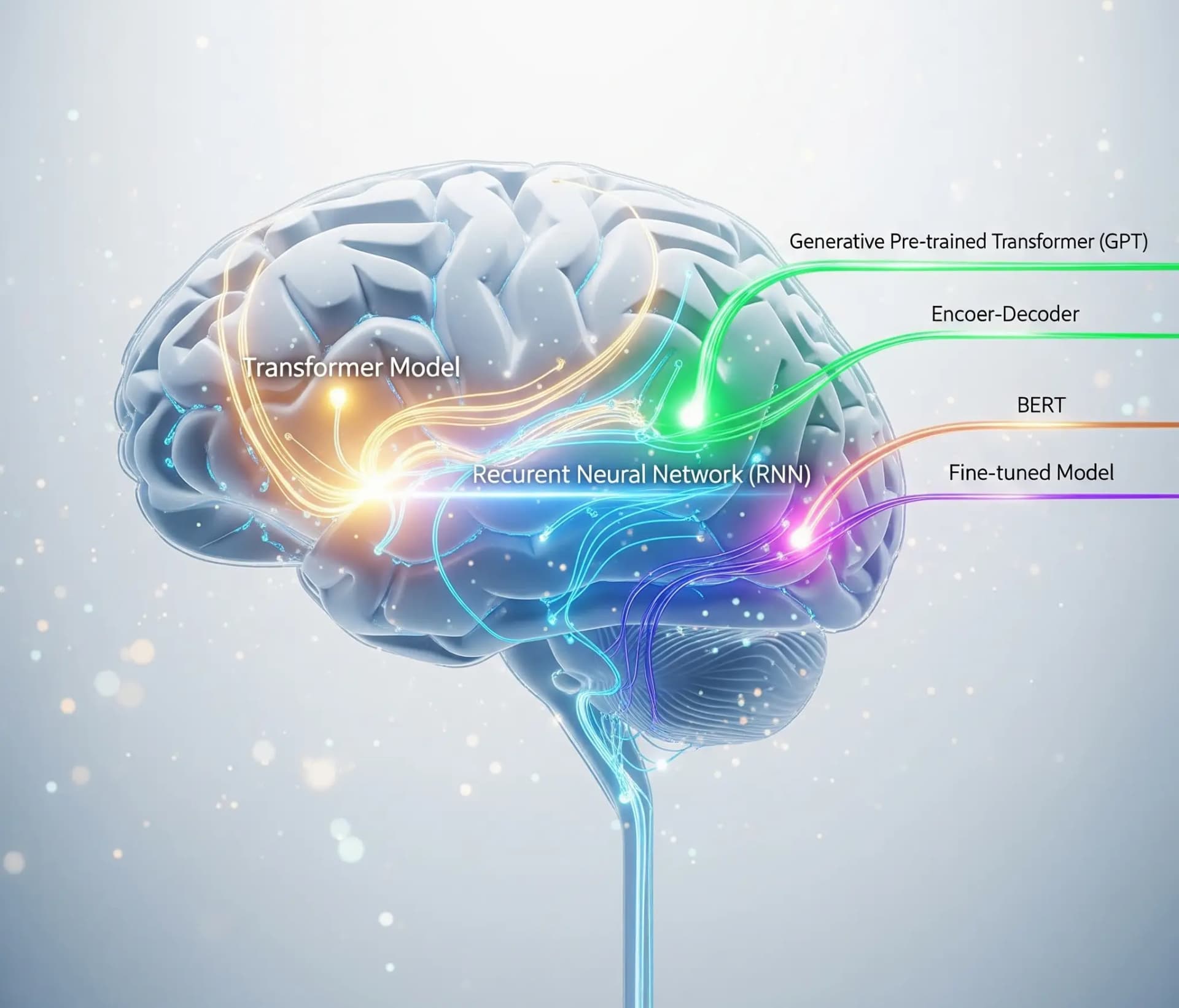

1. Architecture-Based Classification (The "Engine" Type)

This is about how the model "thinks" and processes information.

Autoregressive Models

Imagine writing a story one word at a time, where each new word depends on all the words before it. That's an autoregressive model. Because they build text step by step, they're great at open-ended writing, like drafting an email or a poem.

Example: Most models you interact with, like OpenAI's GPT series or Anthropic's Claude, are autoregressive. They predict the next token (a word or a piece of a word), add it to the text, and repeat.
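To make the "one word at a time" idea concrete, here is a minimal Python sketch. It is a toy bigram model, chosen purely for illustration: it only looks at the previous word, whereas a real LLM conditions on the entire preceding context using a neural network. The generation loop is the same idea, though: predict the next word, append it, repeat.

```python
from collections import Counter, defaultdict

# Toy "training corpus" (hypothetical). Real LLMs learn from trillions of tokens.
corpus = "the cat sat on the mat . the cat ate the fish .".split()

# next_counts[w] counts how often each word followed w in the corpus (a bigram model).
next_counts: dict[str, Counter] = defaultdict(Counter)
for prev, nxt in zip(corpus, corpus[1:]):
    next_counts[prev][nxt] += 1


def generate(prompt: list[str], max_new_tokens: int = 5) -> list[str]:
    """Greedy autoregressive decoding: each new word is chosen based on the text so far."""
    tokens = list(prompt)
    for _ in range(max_new_tokens):
        candidates = next_counts.get(tokens[-1])  # .get() avoids creating empty entries
        if not candidates:  # no known continuation for this word: stop early
            break
        # Append the single most likely next word, then loop to predict the one after it.
        tokens.append(candidates.most_common(1)[0][0])
    return tokens
```

Calling `generate(["the"], 3)` returns `["the", "cat", "sat", "on"]`: each step feeds the model's own previous output back in as input.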

Autoencoding Models

These models are like detectives. During training, some words in a sentence are hidden ("masked"), and the model learns to guess each one using the surrounding context, both before and after the missing word. Reading in both directions makes them excellent at understanding the meaning and context of a text.

Example: Google's BERT, a famous autoencoding model, excels at tasks like sentiment analysis or powering more accurate search results.
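The key difference from autoregressive models is that the guess uses context on both sides of the gap. This toy Python sketch uses a hypothetical four-sentence corpus, and simple counting stands in for BERT's neural network. It fills a `[MASK]` with and without the right-hand context so you can see what the extra direction adds.

```python
from collections import Counter
from typing import Optional

# Hypothetical toy corpus standing in for a model's training text.
corpus = [
    "the cat purred loudly",
    "the dog barked loudly",
    "the dog wagged happily",
    "the dog ran fast",
]

# Count every (left word, middle word, right word) triple.
triples: Counter = Counter()
for sentence in corpus:
    words = sentence.split()
    for triple in zip(words, words[1:], words[2:]):
        triples[triple] += 1


def fill_mask(sentence: str, use_right_context: bool = True) -> Optional[str]:
    """Guess the [MASK] word from its left neighbor and, optionally, its right neighbor."""
    words = sentence.split()
    i = words.index("[MASK]")
    left = words[i - 1] if i > 0 else None
    right = words[i + 1] if i + 1 < len(words) else None
    scores: Counter = Counter()
    for (l, mid, r), n in triples.items():
        # Bidirectional: the candidate must fit BOTH neighbors, not just the one before it.
        if l == left and (not use_right_context or r == right):
            scores[mid] += n
    return scores.most_common(1)[0][0] if scores else None
```

`fill_mask("the [MASK] purred")` returns `"cat"`, while `fill_mask("the [MASK] purred", use_right_context=False)` returns `"dog"`, simply because "dog" follows "the" most often in this corpus. The word after the gap is what resolves it.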

Seq2Seq Models (Sequence-to-Sequence)

These are the translators. They take an input sequence (like a sentence in English) and convert it into a different output sequence (the same sentence in French). They have two parts: an encoder that understands the input and a decoder that generates the output.

Example: This architecture is the backbone of services like Google Translate and is perfect for summarizing long documents into a few bullet points.

2. Availability-Based Classification (The "Keys" to the Car)

Who gets to use the model and how?

Open Source

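The model weights (the learned parameters) are public. Anyone can download, modify, and build on these models, usually for free, though licenses vary and some restrict certain commercial uses. This drives innovation and lets developers create highly custom solutions.

Example: Meta's Llama 3 and many of Mistral AI's models can be downloaded and run on your own hardware. Because their training data and full training code are usually not released, they are often more precisely called "open-weight" models, the term used in the table below.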

Proprietary Models

These are owned and controlled by a single company. You typically access them through a paid API. They are often highly polished and powerful right out of the box.

Example: OpenAI's GPT-4o and Anthropic's Claude 3 Opus are proprietary.

3. Scale-Based Classification (The "Size" of the Engine)

Size is usually measured in parameters, the internal numbers (weights) a model learns during training. More parameters generally bring more capability, along with higher costs to train and run.
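Parameter count translates fairly directly into hardware requirements. As a rough rule of thumb, the weights alone take up (number of parameters) times (bytes per parameter). This small Python helper applies that arithmetic. It ignores the extra memory needed while the model runs (intermediate activations and the conversation context), so treat its output as a lower bound.

```python
# Bytes needed to store one parameter at common numeric precisions.
BYTES_PER_PARAM = {"fp32": 4.0, "fp16": 2.0, "int8": 1.0, "int4": 0.5}


def weight_memory_gb(num_params: float, precision: str = "fp16") -> float:
    """Approximate memory for the weights alone, in gigabytes (1 GB = 10**9 bytes).

    Runtime overhead (activations, context cache) is not included, so real usage is higher.
    """
    return num_params * BYTES_PER_PARAM[precision] / 1e9


# Example sizes: 3.8B, 8B, and 70B parameters.
for params in (3.8e9, 8e9, 70e9):
    fp16 = weight_memory_gb(params, "fp16")
    int4 = weight_memory_gb(params, "int4")
    print(f"{params / 1e9:.1f}B params: ~{fp16:.1f} GB at fp16, ~{int4:.1f} GB at int4")
```

For an 8B model this gives 16 GB at 16-bit precision but only 4 GB when quantized to 4 bits, which is why smaller, quantized models can fit on a laptop while the largest ones cannot.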

Small

Designed to run on local devices like your laptop or phone. Fast and efficient for specific tasks.

Example: Microsoft's Phi-3 and Google's Gemma are small language models (SLMs) that can run directly on a personal computer.

Medium

A balance of power and efficiency. Good for many business applications without needing massive computing power.

Example: Llama 3's 8B (8 billion parameter) version is a popular medium-sized model.

Large

The giants. These models have hundreds of billions of parameters (or more) and need clusters of data-center GPUs to run. They offer state-of-the-art performance on the most complex reasoning tasks.

Example: OpenAI and Anthropic don't disclose parameter counts, but GPT-4o and Claude 3 Opus are widely considered large, frontier models.

4. Classification by Modality (What it "Sees" and "Hears")

Modality refers to the type of data the model can understand.

Unimodal

These models handle only one type of data, usually text.

Example: Early models like GPT-2 were unimodal; they could only read and write text.

Multimodal

The new standard. These models can process and understand several types of input at once: text, images, audio, and even video.

Example: You can show a model like Gemini 1.5 Pro a picture of your refrigerator's contents and ask, "What can I make for dinner?" It understands the image and the text to give you a recipe.

5. Application Scope (The "Job" it's Built For)

Is it a jack-of-all-trades or a specialist?

General Purpose

These are designed to handle a massive range of tasks—from writing and coding to analysis and conversation.

Example: The models behind ChatGPT and Claude are classic general-purpose models.

Specialized LLMs

These are trained or fine-tuned to excel in one specific area.

- Domain-Specific: Trained on data from a particular field to give deeper knowledge of that field. Example: BloombergGPT was trained on a large collection of financial data (alongside general-purpose text) to handle finance-related language tasks.

- Task-Specific: Built for a single function. Example: DeepSeek-Coder is optimized specifically for writing and understanding computer code.

6. Interaction Style (How it "Learns" to Behave)

This is about the final training step that makes a model useful for conversation.

Base Model

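This is the raw, pre-trained model. It has absorbed a huge amount of knowledge from its training data (much of it text from the internet), but it isn't good at following instructions. Ask it a question and it may simply continue the text, for example by writing more questions, instead of answering. It's essentially a powerful text-completion engine.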

Example: Developers might download the Llama 3 Base model to then fine-tune it for their own specific application.

Instruction Tuned

This is a base model that has gone through additional training. It is taught with examples of instructions paired with good answers (supervised fine-tuning), often followed by reinforcement learning from human feedback (RLHF), where people rate responses to steer the model toward helpful ones. This is what turns a model into a helpful assistant. Most companies release instruction-tuned versions of their models, as the table below shows.

Example: The models behind ChatGPT and Llama 3 Instruct are the instruction-tuned versions of their respective base models.
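Under the hood, an instruction-tuned model is still a next-token predictor. What changed is that it was fine-tuned on conversations written in a fixed template. The Python sketch below flattens a chat into one prompt string. The `<|user|>`, `<|assistant|>`, and `<|end|>` markers are hypothetical: each model family defines its own special tokens, and Hugging Face tokenizers expose the real ones through `apply_chat_template`.

```python
from typing import TypedDict


class Message(TypedDict):
    role: str     # "system", "user", or "assistant"
    content: str


def to_prompt(messages: list[Message]) -> str:
    """Flatten a chat into a single string using a made-up <|role|> ... <|end|> template."""
    parts = [f"<|{m['role']}|>\n{m['content']}<|end|>" for m in messages]
    # A trailing assistant marker cues the model that its reply comes next.
    parts.append("<|assistant|>\n")
    return "\n".join(parts)
```

A base model was not trained on this template, so it has no particular reason to treat the trailing marker as a cue to answer. An instruction-tuned model has seen thousands of examples in exactly this shape.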

7. Cognitive Style (How it "Solves" Problems)

This describes the model's approach to reasoning.

Reasoning Models

These models are designed to break down complex, multi-step problems. They can generate a "chain of thought" to show their work before giving a final answer, which is crucial for math, logic puzzles, and planning.

Example: DeepSeek-R1 is a model specifically built to "think before it speaks," generating an internal monologue to reason through a problem.
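Reasoning models often return their chain of thought together with the final answer, and applications usually need to separate the two. This Python sketch assumes the reasoning is wrapped in `<think>...</think>` tags, which is how DeepSeek-R1's open checkpoints typically format it. Other models may use a different format or not expose their reasoning at all.

```python
import re
from typing import NamedTuple


class ReasonedAnswer(NamedTuple):
    reasoning: str
    answer: str


# Assumed format: reasoning inside <think>...</think>, final answer after the closing tag.
THINK_BLOCK = re.compile(r"<think>(.*?)</think>", re.DOTALL)


def split_reasoning(raw_output: str) -> ReasonedAnswer:
    """Separate the model's chain of thought from its final answer."""
    match = THINK_BLOCK.search(raw_output)
    if match is None:  # no reasoning block: treat the whole output as the answer
        return ReasonedAnswer("", raw_output.strip())
    reasoning = match.group(1).strip()
    answer = raw_output[match.end():].strip()  # everything after </think>
    return ReasonedAnswer(reasoning, answer)
```

For the output `"<think>17 * 3 = 51, and 51 + 4 = 55.</think>\nThe answer is 55."`, the reasoning is the arithmetic inside the tags and the answer is `"The answer is 55."`.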

Zero/Few-Shot Learning

This is a key capability of modern LLMs.

- Zero-Shot: You ask it to do something without giving any examples, even a task it was never explicitly trained for, and it can often figure it out. Example: "Classify this movie review as positive or negative."

- Few-Shot: You give it a few examples of what you want, and it picks up the pattern from the prompt itself, with no retraining. Example: "Translate these English words to French: sea -> mer, sun -> soleil, moon -> ???"
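The difference between zero-shot and few-shot lives entirely in the prompt; the model's weights never change. This Python sketch builds both kinds of prompt from the translation example above. The function name and the `->` layout are illustrative choices, not a required format.

```python
def few_shot_prompt(instruction: str, examples: list[tuple[str, str]], query: str) -> str:
    """Build a prompt: instruction, then worked examples (if any), then the open query."""
    lines = [instruction]
    lines += [f"{source} -> {target}" for source, target in examples]
    lines.append(f"{query} ->")  # left unfinished so the model completes it
    return "\n".join(lines)


instruction = "Translate these English words to French:"
zero_shot = few_shot_prompt(instruction, [], "moon")
few_shot = few_shot_prompt(instruction, [("sea", "mer"), ("sun", "soleil")], "moon")
```

Passing an empty example list yields a zero-shot prompt; adding pairs turns the same call into a few-shot prompt.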

Who's Who: The Major AI Models Classified

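Here’s a more detailed look at the key models from the major players. Companies such as OpenAI, Google, and Anthropic don't publish parameter counts for their proprietary models, so those Scale entries are informed estimates.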

| Company | Model Family / Specific Model | Architecture | Availability | Scale | Modality | Application Scope | Interaction Style | Cognitive Style |
|---|---|---|---|---|---|---|---|---|
| OpenAI | GPT-4o / 4.1 / 4.5 | Autoregressive | Proprietary | Large | Multimodal | General Purpose | Instruction Tuned | General / Reasoning |
| | o1 / o3 | Autoregressive | Proprietary | Medium/Large | Text/Multimodal | Specialized | Instruction Tuned | Reasoning-Focused |
| | Sora | Diffusion Transformer | Proprietary | Large | Multimodal (Video) | Specialized | Instruction Tuned | Generative |
| | GPT-OSS | Autoregressive (MoE) | Open-Weight | Small & Large | Text | General Purpose | Instruction Tuned | General / Reasoning |
| Google | Gemini 2.0 / 2.5 | Autoregressive | Proprietary | Small to Large | Multimodal | General Purpose | Instruction Tuned | Reasoning-Enhanced |
| | Gemma / 2 / 3 | Autoregressive | Open-Weight | Small to Medium | Text/Multimodal | General Purpose | Instruction Tuned | General |
| Meta | Llama 2 / 3 | Autoregressive | Open-Weight | Small to Large | Text | General Purpose | Instruction Tuned | General |
| | Llama 3.1 | Autoregressive | Open-Weight | Medium to Very Large | Text | General Purpose | Instruction Tuned | General |
| | Llama 4 | Autoregressive (MoE) | Open-Weight | Very Large | Multimodal | General Purpose | Instruction Tuned | General |
| Anthropic | Claude 3 / 3.5 / 3.7 | Autoregressive | Proprietary | Small to Large | Multimodal | General Purpose | Instruction Tuned | Reasoning-Enhanced |
| | Claude 4 | Autoregressive | Proprietary | Large | Multimodal | General Purpose | Instruction Tuned | Advanced Reasoning |
| DeepSeek | DeepSeek-V3 | Autoregressive (MoE) | Open-Weight | Very Large | Text | General Purpose | Instruction Tuned | General |
| | DeepSeek-R1 | Autoregressive (MoE) | Open-Weight | Very Large | Text | Specialized | Instruction Tuned | Reasoning-Focused |
| | Distilled R1 Models | Autoregressive | Open-Weight | Small to Medium | Text | Specialized | Instruction Tuned | Reasoning-Focused |
| Mistral | Mistral Large 2 | Autoregressive | Open-Weight (research license) | Large | Text | General Purpose | Instruction Tuned | General |
| | Mistral Small 3 | Autoregressive | Open-Weight | Small | Text | General Purpose | Instruction Tuned | General |
| | Codestral | Autoregressive | Open-Weight | Medium | Text | Specialized | Instruction Tuned | Code-Focused |

Ready to See for Yourself?

Now you know the difference between a multimodal, open-source model and a proprietary, domain-specific one.

The next time you read about a new AI release, you won't just see a name; you'll understand its DNA.

Want to take the next step in customizing how your model performs? Read our detailed comparison on RAG vs. Fine-Tuning: When to Use Each to understand which approach helps you get the most from your LLM.

Until then…